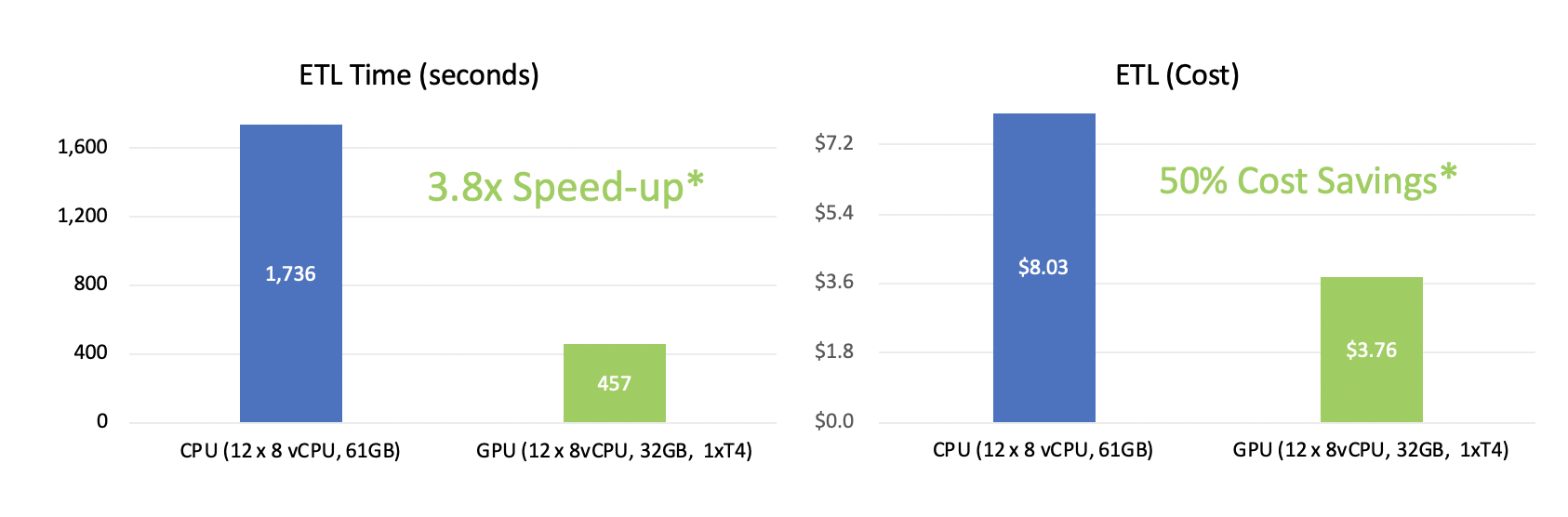

Accelerate Distributed Deep Learning - Implementing GPU Accelerated Apache Spark 3.0 in Cisco Data I... - Cisco Community

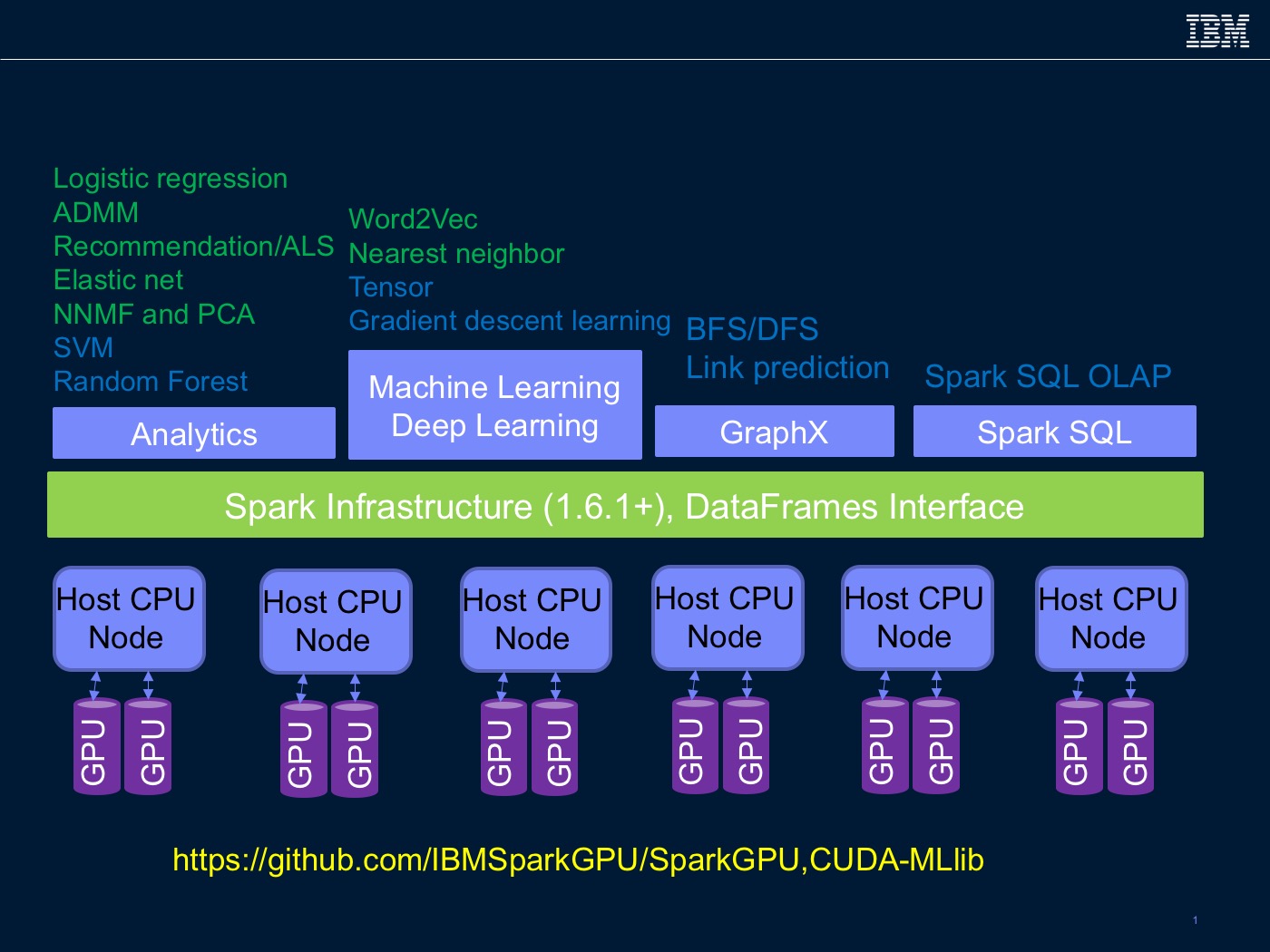

GitHub - IBMSparkGPU/SparkGPU: GPU* or SPARK* branches are used for generating GPU code in Tungsten/contact:@kiszk, MLlib branch is used for CUDA-MLlib project/contact:@bherta

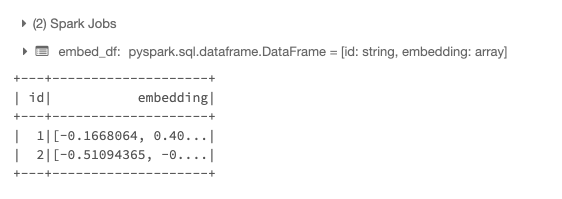

High-performance Inferencing with Transformer Models on Spark | by Dannie Sim | Towards Data Science
